Abstraction Fallacy: Why Google DeepMind Just Declared AI Consciousness Impossible

Google DeepMind Senior Scientist Alexander Lerchner has just released a paper arguing that AI consciousness is impossible. He calls the belief that it is possible the "Abstraction Fallacy."

The tech industry is obsessed with the idea of AGI "waking up." But while Silicon Valley billionaires debate the ethics of "AI rights" and existential sentience, a Senior Scientist at Google DeepMind, Alexander Lerchner, just published a paper that pours cold water on the entire philosophical debate.

His paper argues that AI consciousness isn't just unlikely; it is structurally and mathematically impossible. He calls the opposing belief the "Abstraction Fallacy."

Mapmaker Dependency

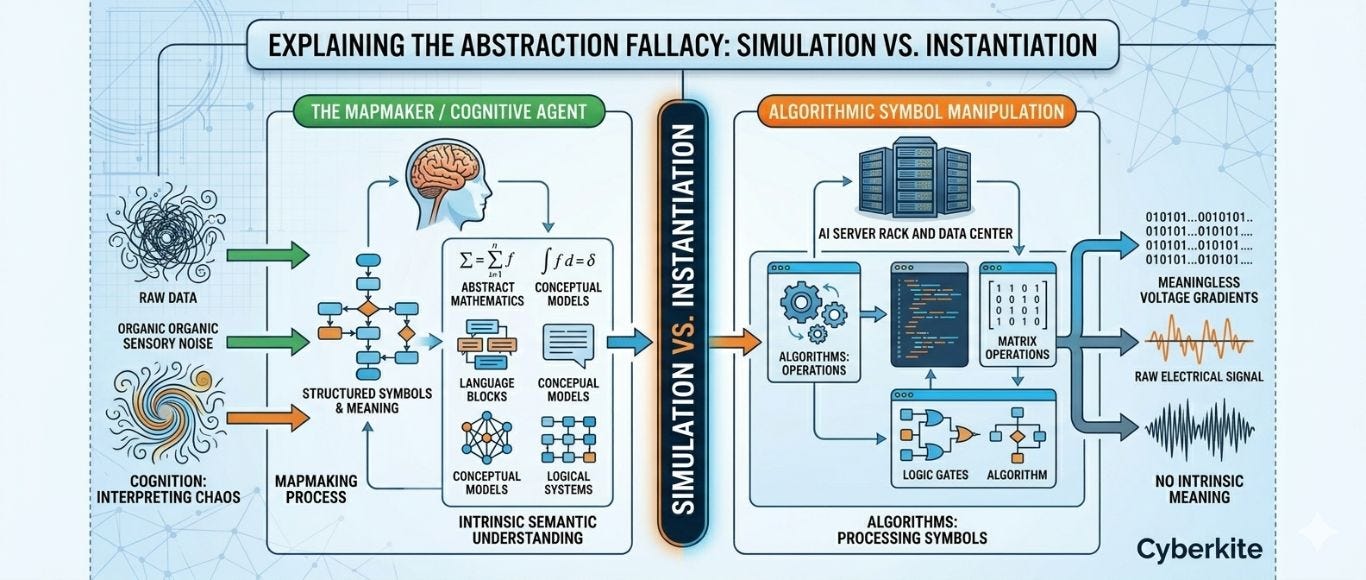

The core of Lerchner's argument is that computational systems do not possess intrinsic meaning. When a Large Language Model (LLM) generates a flawless essay or writes complex code, the machine itself understands nothing.

Computation is a description of a process. For something to compute, a conscious observer (a human) must first step in as the "Mapmaker." We have to carve physical reality into symbols and assign them meaning. Without a conscious human to interpret the output, an AI server rack is nothing but electrical voltage gradients shifting across a silicon substrate.
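One small way to see the Mapmaker point for yourself: the sketch below is my own illustration in Python, not something taken from Lerchner's paper. It takes the same four bytes and reads them three different ways. The byte values and variable names are arbitrary choices made for the example.

```python
import struct

# Four arbitrary bytes. Physically, this is just one fixed bit pattern.
raw = bytes([0x48, 0x69, 0x21, 0x00])

# Interpretation 1: ASCII text (first three bytes only).
as_text = raw[:3].decode("ascii")

# Interpretation 2: one little-endian unsigned 32-bit integer.
as_int = struct.unpack("<I", raw)[0]

# Interpretation 3: one little-endian 32-bit float.
as_float = struct.unpack("<f", raw)[0]

print(as_text, as_int, as_float)
```

Nothing in the bytes themselves picks one reading over the others; the choice of decoder comes from whoever wrote the code. Whether that observation stretches all the way to consciousness is the paper's argument to make, not this snippet's.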

Photosynthesis Metaphor

The most powerful concept in the paper is this simple analogy:

If you build a perfect, trillion-parameter simulation of photosynthesis on a GPU, it will map every photon, every chemical reaction, and every cellular process perfectly. But no matter how powerful you make that GPU, it will never produce a single drop of actual glucose. Simulation is not instantiation. Maps do not become territory.

Scaling a model (adding more parameters, more compute, and more data) cannot change the fundamental category of what the machine is doing. Syntactic architecture can simulate behavior, but it cannot instantiate subjective experience.

Enterprise Reality Check

Why does this matter for IT and business leaders? Because it ends the distraction.

The "AI welfare trap" and science-fiction doomsday scenarios have dominated the narrative, pulling focus away from the actual threats. DeepMind’s paper provides a rigorous, physically grounded refutation of AI sentience. It confirms that highly capable AGI will not be a novel moral patient; it will simply be a highly sophisticated, non-sentient tool.

It is time to stop debating AI consciousness and start rigorously managing AI governance. The threats aren't sentience; they are data privacy, copyright, integration debt, and security vulnerabilities.

The machines aren't waking up. Let's govern them accordingly.

Happy computing, human.

Regards,

Michael Plis

References

To dive deeper into the Abstraction Fallacy:

📄 The DeepMind Paper: Read Alexander Lerchner’s full paper, "The Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness": https://deepmind.google/research/publications/231971/

📰 404 Media Breakdown: Great coverage of the paper's argument that AGI will only ever be a "non-sentient tool," and of why we need to stop getting distracted by sci-fi narratives: https://www.404media.co/google-deepmind-paper-argues-llms-will-never-be-conscious/