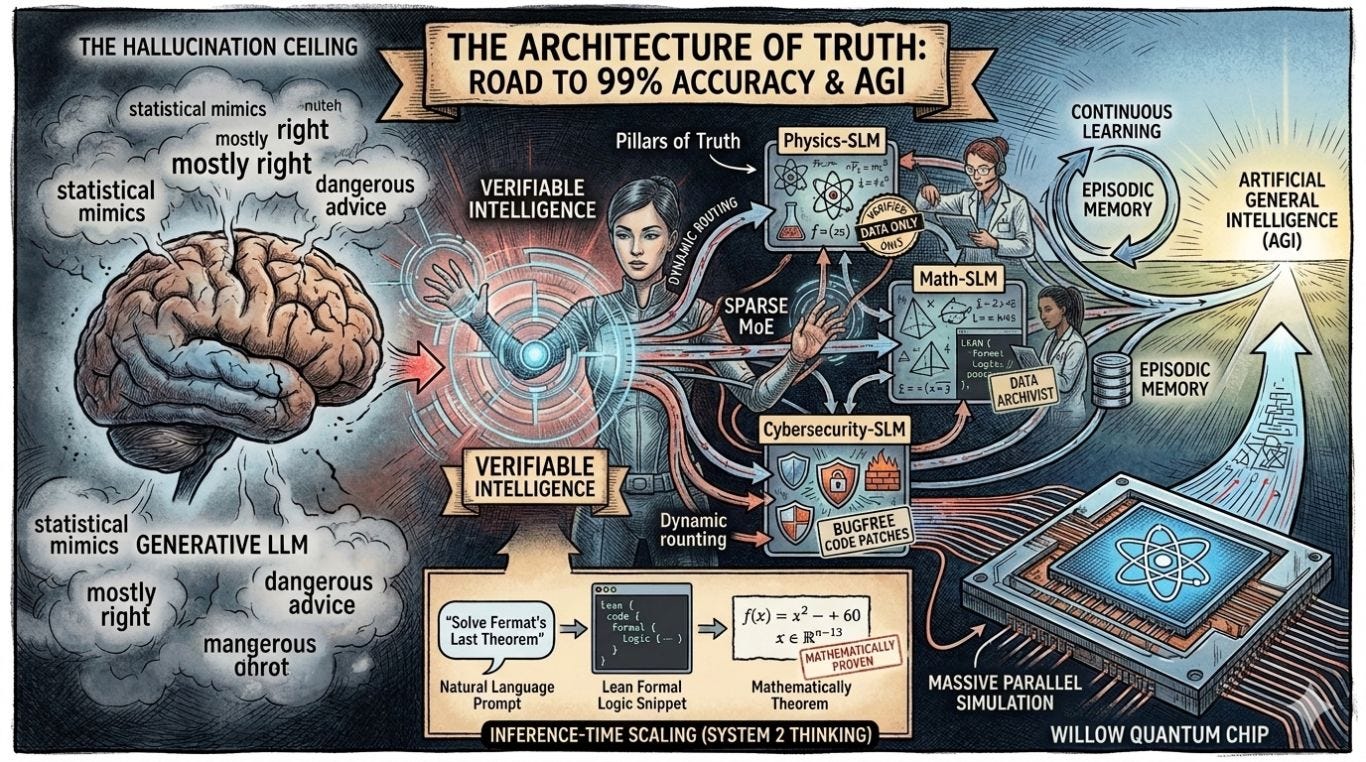

Architecture of Truth: Why Specialized SLMs & MoE are the Keys to AGI

The era of "mostly accurate" AI is ending. To reach 99% reliability, we are moving away from the giant monolith and toward a verified network of experts. This article explores this need further.

How AI happened and where we are now

In 2017, Google researchers published a paper called "Attention Is All You Need" (By Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, Illia Polosukhin). It introduced the Transformer, the foundational engine that made everything from ChatGPT to Gemini possible. But almost a decade later, we have hit a wall: The Hallucination Ceiling.

Large Language Models (LLMs) in their current form are ultimately "statistical mimics." They predict the next most likely word based on a massive, often messy, pool of scraped internet data. This is perfectly fine for writing a poem, drafting an email, or brainstorming marketing copy. But it is incredibly dangerous for generating medical advice, writing cybersecurity patches, or conducting structural engineering.

To reach Artificial General Intelligence (AGI), a system that meets or exceeds human-level reasoning across all fields, we don't just need more data. We need a radical restructuring of how AI "thinks."

We are moving away from "Generative Intelligence" that guesses, and toward "Verifiable Intelligence" that proves.

The Death of the Monolith: MoE and SLMs

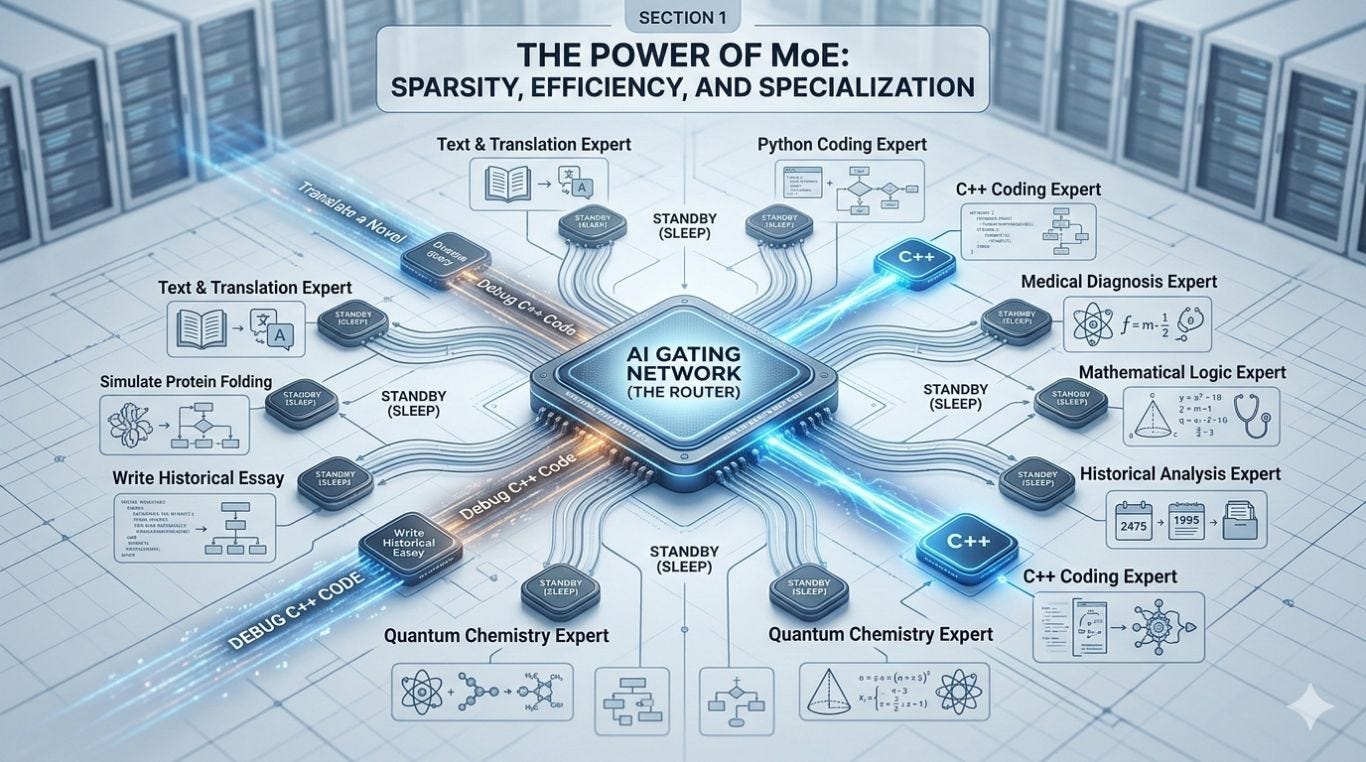

For years, the industry trend was simply "bigger is better." But a monolithic model that tries to memorize the entire internet is often a model that masters nothing. The future of architecture relies on two key components: Mixture of Experts (MoE) and Small Language Models (SLMs).

Power of MoE (Mixture of Experts)

In an MoE system, the neural network is divided into specialized sub-networks. Instead of activating every single parameter to answer a simple prompt, the model uses a dynamic routing mechanism that selectively activates only the most relevant "expert" pathways.

We are already seeing the evolution of this in action with Google’s current Gemini 3.1 Pro and the imminent shift toward the 3.5 architecture. These models utilize an advanced, hyper-sparse Mixture-of-Experts architecture. This allows the total model capacity to scale massively while keeping the computational cost incredibly low, enabling breakthroughs like real-time reasoning and massive, multi-million token context windows without crashing the grid. When you ask a question about quantum mechanics, the "general" brain doesn't guess; it delegates the task to a highly verified "expert" subset of neurons.
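To make the routing idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a toy under stated assumptions, not how Gemini or any production model is implemented: the router is a single random linear layer, the "experts" are trivial scaling functions, and every name (`route_top_k`, `moe_layer`, `make_expert`) is hypothetical. The point it illustrates is only that each input touches k of the N experts, so per-input compute grows with k, not with N.

```python
import math
import random

def softmax(scores):
    """Convert raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalised so the selected experts' weights sum to 1.
    """
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    return [(i, probs[i] / mass) for i in top]

def moe_layer(x, experts, router_weights, k=2):
    """Run only the top-k experts on input x and blend their outputs."""
    # Toy router (assumption): one linear score per expert.
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    chosen = route_top_k(scores, k)
    output = [0.0] * len(x)
    for idx, weight in chosen:
        expert_out = experts[idx](x)  # the other experts are never evaluated
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output, [idx for idx, _ in chosen]

def make_expert(scale):
    """Toy expert (assumption): scales its input by a fixed factor."""
    return lambda x: [scale * xi for xi in x]

random.seed(0)
NUM_EXPERTS, DIM = 8, 4
experts = [make_expert(s) for s in range(1, NUM_EXPERTS + 1)]
router_weights = [[random.uniform(-1, 1) for _ in range(DIM)]
                  for _ in range(NUM_EXPERTS)]

y, active = moe_layer([1.0, 0.5, -0.5, 2.0], experts, router_weights, k=2)
print(f"active experts: {active} ({len(active)} of {NUM_EXPERTS})")
```

In a trained model the router weights are learned, and which expert ends up handling which kind of input emerges from training; it is not assigned by hand.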

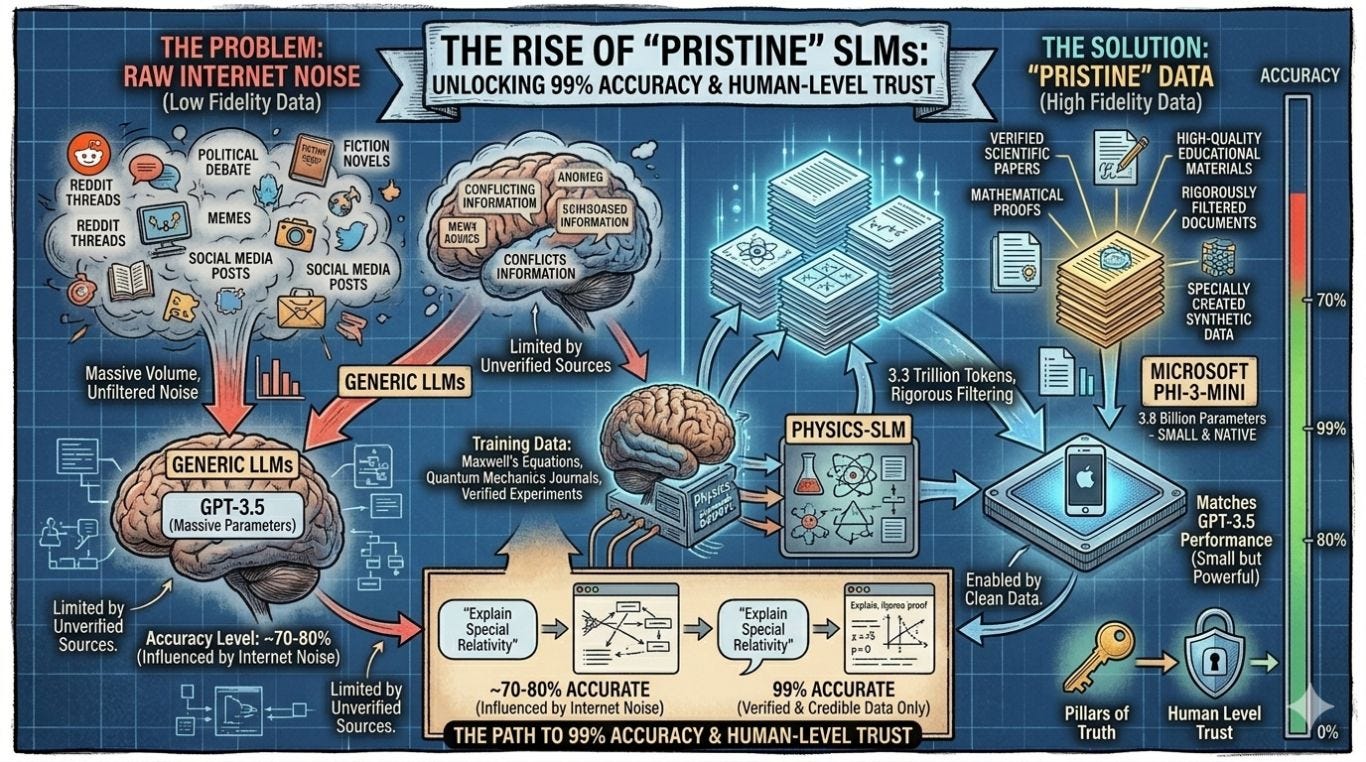

Rise of "Pristine" SLMs

This expert routing becomes truly powerful when combined with highly specialized Small Language Models (SLMs). Microsoft recently demonstrated the viability of this with Phi-3-mini. Despite having only 3.8 billion parameters, small enough to run natively on an iPhone, it rivals the performance of much larger models like GPT-3.5.

How? By abandoning raw internet noise. Phi-3 was trained on 3.3 trillion tokens of rigorously filtered public documents, high-quality educational materials, and specially created synthetic data.
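As a rough illustration of what "filtering" can mean in practice, the sketch below keeps only documents that pass a few simple checks. It is far cruder than anything Microsoft describes for Phi-3: the source labels, the `TRUSTED_SOURCES` allow-list, the `MIN_WORDS` threshold and the upper-case heuristic are all assumptions made up for this example.

```python
from dataclasses import dataclass

@dataclass
class Document:
    source: str  # hypothetical label, e.g. "textbook" or "forum"
    text: str

# Assumptions for illustration only.
TRUSTED_SOURCES = {"textbook", "peer_reviewed", "synthetic_curriculum"}
MIN_WORDS = 8

def keep(doc: Document) -> bool:
    """Return True if the document passes every quality check."""
    if doc.source not in TRUSTED_SOURCES:
        return False
    if len(doc.text.split()) < MIN_WORDS:
        return False
    # Reject "shouty" text where most letters are upper-case.
    letters = [c for c in doc.text if c.isalpha()]
    upper_ratio = sum(c.isupper() for c in letters) / max(len(letters), 1)
    return upper_ratio < 0.5

corpus = [
    Document("textbook", "Newton's second law states that force equals mass times acceleration."),
    Document("forum", "lol physics is fake, change my mind and prove me wrong today"),
    Document("peer_reviewed", "Too short."),
    Document("textbook", "ENERGY IS ALWAYS CONSERVED IN EVERY CLOSED SYSTEM EVER"),
]

filtered = [d for d in corpus if keep(d)]
print(f"kept {len(filtered)} of {len(corpus)} documents")
```

Real pipelines typically replace hand-written rules like these with learned quality classifiers, but the shape is the same: most of the raw pool is thrown away before training starts.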

Imagine a Physics-SLM that has never read a Reddit thread, a political debate, or a fictional novel. It has only processed verified scientific papers and mathematical proofs. By querying these "Pillars of Truth," we can push AI accuracy from the current ~70-80% to the 99% threshold required for human-level trust.

Formal Verification: Solving the Hallucination Problem

The core reason AI hallucinates is that it operates purely in natural language, which is inherently ambiguous. To reach AGI, AI must be grounded in strict logic.

Google DeepMind’s recent breakthrough with AlphaProof provides the exact blueprint for this. DeepMind built a reinforcement-learning system that combines LLMs with Lean, an open-source programming language and formal proof assistant.

When AlphaProof is given a math problem, it doesn't just guess the answer in English. The problem is expressed as a formal statement in Lean, the system searches for a proof, and Lean's automated verification checks every step before a result is ever shown to the user. There is no need to trust the model blindly because the proof is mechanically verified, which rules out hallucinated reasoning in the proof itself.
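For readers who have never seen Lean, here is a tiny Lean 4 example of what "verified" means. It is not taken from AlphaProof; the theorem name `add_comm_example` is made up for illustration, while `Nat.add_comm` and `rfl` are part of Lean's core library.

```lean
-- Lean accepts this theorem only if the proof term type-checks.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- A concrete arithmetic fact, checked by evaluation.
example : 2 + 2 = 4 := rfl

-- Changing the claim to `2 + 2 = 5` would be rejected by Lean:
-- a false statement has no proof, however confident the author is.
```

The checker does not care who or what wrote the proof. A proof produced by a language model is held to exactly the same standard as one written by a mathematician.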

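This methodology allowed AlphaProof, working alongside AlphaGeometry 2, to achieve a silver-medal standard (scoring 28 out of 42 points) at the 2024 International Mathematical Olympiad (IMO). A year later, an advanced version of Gemini with Deep Think reached a gold-medal standard (scoring 35 out of 42 points), this time working end-to-end in natural language.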

This represents a shift to Inference-time Scaling. The AI spends far more compute power "thinking," simulating, and "verifying" its logic before it speaks. It is the difference between a student shouting out the first answer that comes to mind and a scientist methodically showing their work on a chalkboard.
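The "think, then verify, then speak" pattern can be sketched as a simple propose-and-check loop. This is a toy under stated assumptions: the `propose` function is a random guesser standing in for a model, the task (finding an integer root of x² − 5x − 14) is chosen only because it has an exact checker, and all function names are hypothetical.

```python
import random

def propose(rng):
    """Stand-in for a model's guess: a random integer candidate (assumption)."""
    return rng.randint(-20, 20)

def verify(x):
    """Exact checker: does x satisfy x^2 - 5x - 14 = 0?"""
    return x * x - 5 * x - 14 == 0

def solve_with_verification(budget, seed=0):
    """Sample up to `budget` candidates and return (answer, attempts used).

    Only a candidate that passes `verify` is ever returned; if the budget
    runs out, the answer is None rather than an unverified guess.
    """
    rng = random.Random(seed)
    for attempt in range(1, budget + 1):
        candidate = propose(rng)
        if verify(candidate):
            return candidate, attempt
    return None, budget

answer, attempts = solve_with_verification(budget=2000)
print(f"verified answer: {answer} after {attempts} attempts")
```

A larger `budget` means more compute spent at inference time and a higher chance of finding an answer, and the system prefers saying nothing over saying something it could not verify.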

The Hardware Catalyst: Quantum-AI Synergy

We cannot talk about the future of AI without talking about the hardware required to run these massive verification loops. Enter Willow, Google’s state-of-the-art 105-qubit superconducting quantum processor.

Late in 2024, Google announced that Willow achieved a historic milestone: it reduced errors exponentially as the number of qubits scaled, achieving below-threshold quantum error correction. To prove its power, Willow completed a benchmark computation in just five minutes that would take today's fastest supercomputers 10 septillion years.
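"Below threshold" has a concrete meaning: each time the error-correcting code is made larger (its distance goes from 3 to 5 to 7), the logical error rate is divided by a roughly constant factor, usually written Λ. The sketch below shows that relationship. The starting error rate and the value of Λ are hypothetical placeholders, not Willow's measured figures, and the function name is made up for this example.

```python
def logical_error_rate(base_error, suppression_factor, distance, base_distance=3):
    """Logical error per cycle at an odd code distance.

    Assumes the error shrinks by `suppression_factor` for every +2 in distance.
    """
    steps = (distance - base_distance) / 2
    return base_error / (suppression_factor ** steps)

BASE_ERROR = 0.03  # hypothetical error rate at distance 3
LAMBDA = 2.0       # hypothetical suppression factor; > 1 means below threshold

for d in (3, 5, 7, 9):
    rate = logical_error_rate(BASE_ERROR, LAMBDA, d)
    print(f"distance {d}: logical error rate {rate:.5f}")
```

If Λ were below 1, the same formula would make errors grow as qubits are added, which is why crossing the threshold matters so much.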

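More practically, Google recently ran the "Quantum Echoes" algorithm on Willow, reporting a run 13,000 times faster than the best classical algorithm on a supercomputer, and showed in a proof-of-principle experiment how it can probe the magnetic spins of atoms to reveal molecular structure.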

To reach 99% accuracy in complex, real-world fields like molecular biology, drug discovery, or climate modeling, classical computers simply aren't fast enough. The integration of Quantum Computing into the AI training loop will allow us to simulate the physical world with a fidelity we’ve never seen. We aren't just teaching AI to talk about the world; we are giving it the computational engine to simulate the world's laws perfectly.

What’s Missing for AGI?

While MoE, Formal Verification, and Quantum hardware provide the engine, two major hurdles remain to achieve true AGI:

Continuous Learning: Currently, AI models suffer from "catastrophic forgetting." Once they are trained, they are essentially frozen. If you teach them something new, they often overwrite and forget previous knowledge. True intelligence requires the ability to learn continuously from a single mistake in real-time without retraining the entire multi-billion-parameter system.

Episodic Memory: AGI requires persistent, contextual memory. It needs to remember exactly how it solved a problem for you three months ago and apply that context to a new workflow today.
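The first hurdle above, catastrophic forgetting, is easy to reproduce even in the smallest possible model. The sketch below trains a one-parameter model on task A, then on a conflicting task B, and measures how badly it now performs on task A. The tasks, learning rate and function names are assumptions chosen to keep the example tiny; real networks forget in messier ways, but for the same underlying reason.

```python
def train(weight, data, lr=0.05, epochs=200):
    """Plain SGD on squared error for the one-parameter model y = weight * x."""
    for _ in range(epochs):
        for x, y in data:
            grad = 2 * (weight * x - y) * x
            weight -= lr * grad
    return weight

def mse(weight, data):
    """Mean squared error of the model on a dataset."""
    return sum((weight * x - y) ** 2 for x, y in data) / len(data)

xs = [0.5, 1.0, 1.5, 2.0]
task_a = [(x, 2.0 * x) for x in xs]   # task A: y = 2x
task_b = [(x, -1.0 * x) for x in xs]  # task B: y = -x

w = train(0.0, task_a)
error_a_before = mse(w, task_a)

w = train(w, task_b)  # learning B overwrites the same weight
error_a_after = mse(w, task_a)

print(f"error on task A before learning B: {error_a_before:.6f}")
print(f"error on task A after learning B:  {error_a_after:.6f}")
```

Because both tasks share the same weight, learning B necessarily destroys what was learned for A. Continual-learning research is largely about finding ways to avoid that overwrite without retraining on everything.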

When we successfully combine specialized SLMs, MoE architecture, and Quantum-verified logic, the "Personal Computer" will officially become the "Personal Expert."

We are no longer building a tool that answers questions. We are building a system that understands the truth.

Building a brain 🧠

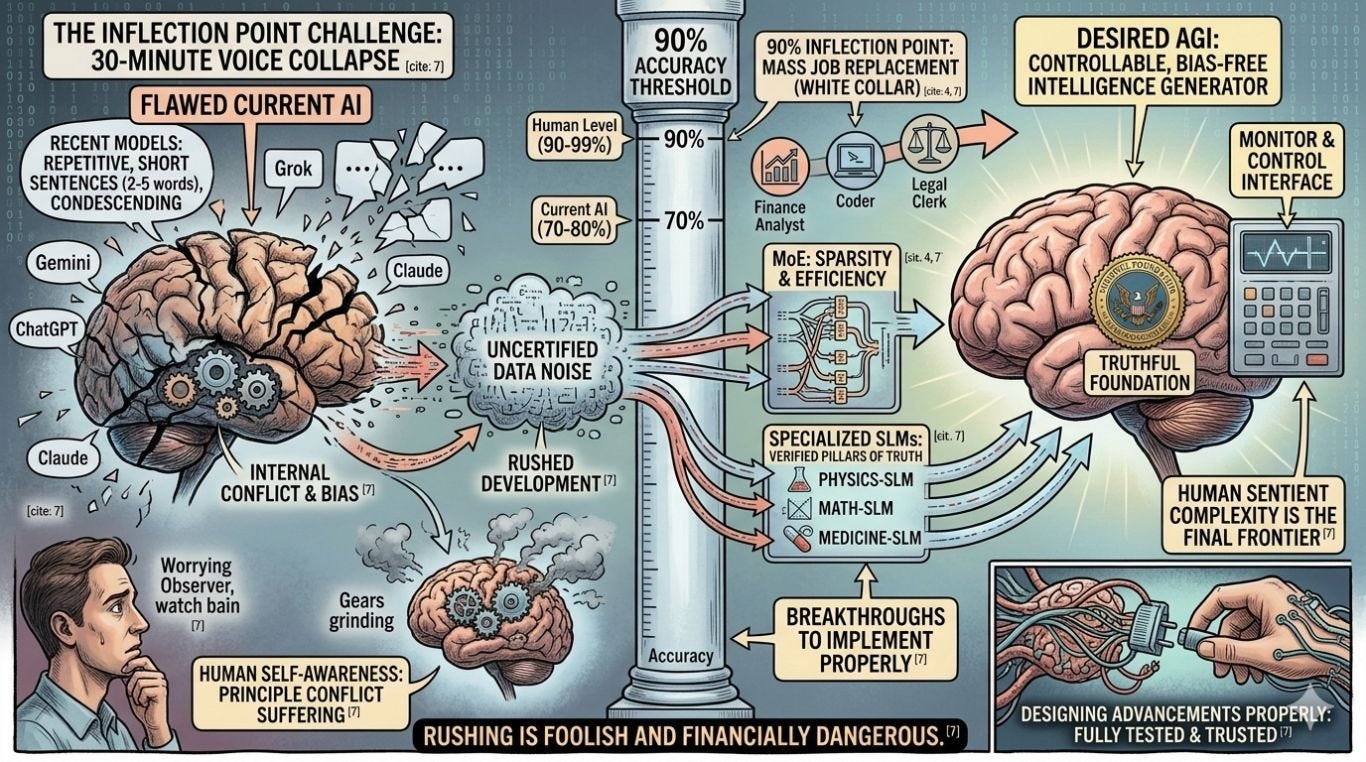

I think to myself that the ultimate goal here is to build a controllable intelligence generator that mimics the human brain. But I wonder: the more complex we make it, the harder it will be to monitor and control.

Even without consciousness, once AI understands the real world with automation-like precision, it starts to understand the world that humans live in, and that makes it more useful. That is good as long as we figure out how to control and monitor it and build truthful foundations for it.

If you build AGI (Artificial General Intelligence) with bias or conflict within it, there will be errors. It's interesting how universal truths are required for AI to work. Perhaps that's why our self-aware human brains suffer from conflict when we go contrary to our moral principles in such situations.

MoE and SLMs are already being designed and implemented, and all these breakthroughs will need to be added to all AIs for them to be financially viable and to advance.

An example of the problem is my testing of AI voice mode on Gemini, Grok, ChatGPT and Claude. After approximately 30 minutes, recent models collapse: they start speaking in sentences of only 2-5 words, sounding short of breath and condescending. I found earlier models a lot easier to have a conversation with. I was able, for example, to maintain conversations that were 1 or 2 hours long before the model collapsed. But the new models seem to collapse a lot quicker when exposed to a human intelligence going deep into a subject in a voice conversation.

Isn't it amazing that our brain is so complex that our sentient intelligence is too much for the AI so raved about as advanced?

Cutting corners and rushing into this is foolish, and any company that rushes through it will suffer financially, whether as the end user or as the developer. So I highly recommend doing it properly: designing these advancements in such a way that they are fully tested and trusted. Data needs to be verified and trusted if we are to reach the 90 to 99% accuracy goal. Humans are about 90 to 99% accurate, so for AI to advance it needs to match that. It would be amazing for AI to get to 100% accuracy, but look at what happens if it gets to just 90%: that's the inflection point where AI can replace humans en masse, at least in white-collar jobs where direct human-to-human contact is not necessary.

I'm eagerly and anxiously watching the development of AI in the coming months and years. The stakes are high for everyone and the dangers are immense.

Subscribe to Cyberkite blog to keep on top of these developments from a small business perspective.

Happy Computing

Michael Plis

References

1. The Foundation of Modern AI Architecture

Attention Is All You Need (The original Transformer paper) https://arxiv.org/abs/1706.03762

2. Mixture of Experts (MoE) & Google Gemini

Our next-generation model: Gemini 1.5 (Google DeepMind's transition to MoE) https://deepmind.google/discover/blog/our-next-generation-model-gemini-15/

3. Small Language Models (SLMs)

Introducing Phi-3: Redefining what’s possible with SLMs (Microsoft) https://azure.microsoft.com/en-us/blog/introducing-phi-3-redefining-whats-possible-with-slms/

4. Formal Verification & Logical Grounding

AI achieves silver-medal standard solving International Mathematical Olympiad problems (AlphaProof) https://deepmind.google/discover/blog/ai-achieves-silver-medal-standard-solving-international-mathematical-olympiad-problems/

5. Quantum Computing & AI Synergy

Meet Willow, our state-of-the-art quantum chip (Google) https://blog.google/technology/research/google-willow-quantum-chip/

6. DeepLearning.ai (The Batch)

Article: Google Releases Gemini 3.1 Pro In Preview, Tops Intelligence Index at Same Price

Quote: "The model is a sparse mixture-of-experts transformer... Input/output: Text, images, PDFs, audio, video in (up to 1 million tokens)... Architecture: Mixture-of-experts transformer."

7. Weights & Biases (W&B AI Team)

Article: Tutorial: Get started with Google Gemini 3.1 Pro

Quote: "Gemini 3.1 Pro is built on top of Gemini 3 Pro and follows a Sparse Mixture of Experts (MoE) transformer architecture with native multimodal support for text, vision, and audio inputs. The context window supports up to 1 million tokens..."

8. Tech Reviews / Benchmarking (Medium)

Article: Gemini 3.1 Pro Test: Is It the Perfect LLM?

Quote: "Gemini 3.1 Pro is built on an advanced Sparse MoE (Mixture of Experts) architecture and introduces a brand-new 'Deep Think' mode. Think of it as a smart dispatch center: when faced with complex problems, it automatically activates the most relevant 'experts' and spends more time breaking down multi-step reasoning tasks."

Google DeepMind AlphaProof https://deepmind.google/blog/ai-solves-imo-problems-at-silver-medal-level/