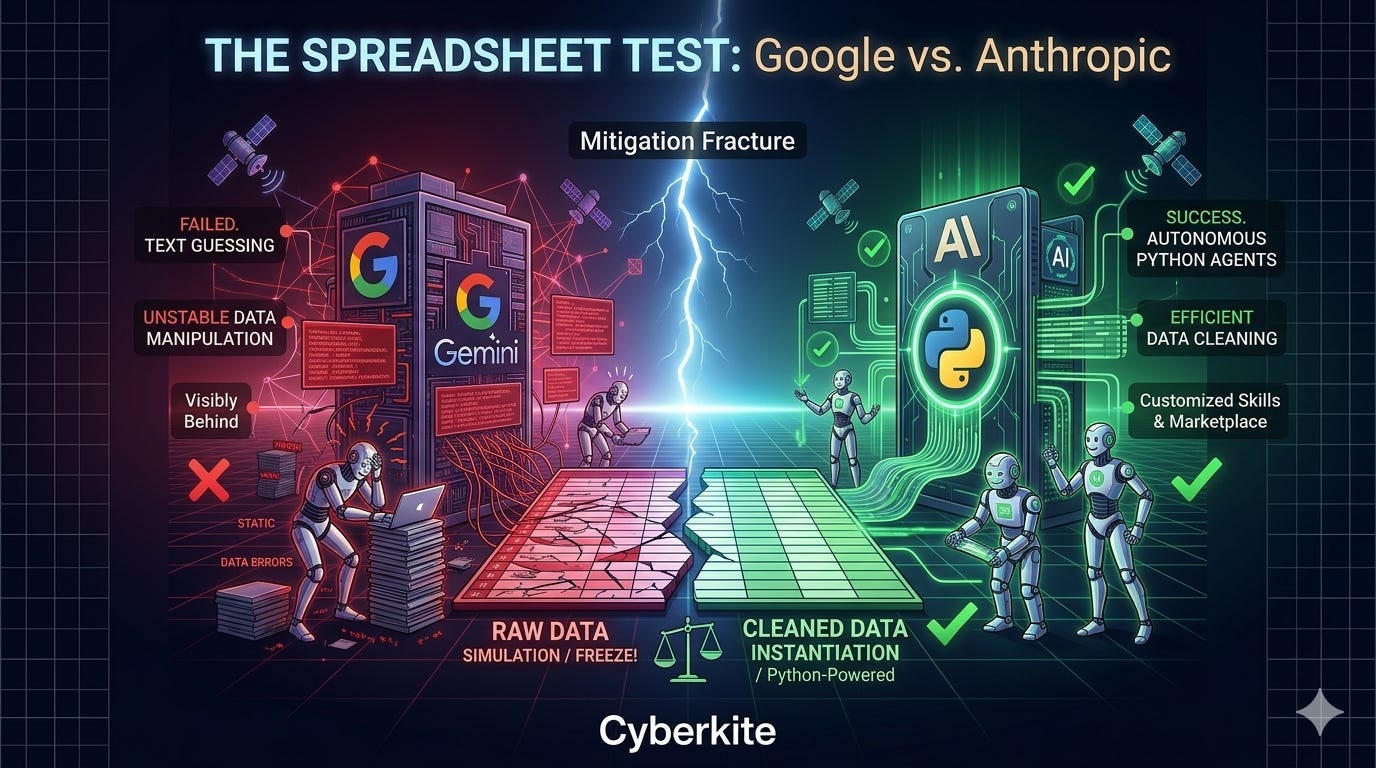

Spreadsheet Test: Why Google’s Gemini Is Losing the Data War to Anthropic’s Claude

I tested paid Gemini 3.1 Pro against free Claude for deep data manipulation. The results are a massive wake-up call for Workspace users.

If you are paying for Google Workspace right now, you have likely seen the marketing: Gemini 3.1 Pro is supposed to be your ultimate, autonomous data assistant. It promises to read, manipulate, and format your Google Sheets and Excel files seamlessly. Google also says it can work with your Docs files and other Workspace editor files.

As an IT professional, I like to test these claims against real-world business scenarios. On May 12, 2026, I put Gemini to the test against the free version of Anthropic’s Claude.

The results were a glaring reality check for Google’s current AI ecosystem.

The Timesheet AI Test

The task was standard data hygiene. I had a demo time-entry Excel document that was automatically generated and notoriously messy. It required a strict set of rules:

Tighten start/end times to specific parameters.

Identify and remove weekend entries.

Recalculate the auto-duration column.

Detect and delete duplicate entries.

I ran Gemini 3.1 Pro within my Workspace standard subscription. The result was catastrophic. The AI sampled five rows, froze the entire Google Sheet so that the document became unusable, and ultimately returned an error stating it was “unable to do a complex set of editing actions.” I tried switching formats, using the side panel, and adjusting prompts, and none of it helped. In my testing, the system was fragmented, clunky, and fundamentally incapable of deep data manipulation.

Anthropic Advantage: Code over Text

Frustrated, I took the exact same file and prompt to a free Anthropic Claude account.

Within minutes, Claude succeeded where Google failed. Why? Because of architectural philosophy. Google is trying to use a Large Language Model to “predict” spreadsheet formatting. Anthropic realizes that data manipulation requires computation.

Claude autonomously devised a strategy to write mini Python scripts in the background. It ran the scripts against my data within its secure sandbox, executing the logic flawlessly. In roughly 10 minutes, the file was cleaned.
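To give a feel for what such a script could look like, here is a minimal sketch of the four timesheet rules in plain Python. It is not the code Claude actually wrote, which I did not keep. The column names (`employee`, `start`, `end`), the 08:00 to 18:00 working window, and the function name `clean_timesheet` are all assumptions made for illustration.

```python
from datetime import datetime, time

# Hypothetical working window; the real limits from my test are not shown here.
DAY_START = time(8, 0)
DAY_END = time(18, 0)


def clean_timesheet(rows):
    """Apply the four rules to a list of dict rows.

    Each row is assumed to have 'employee', 'start' and 'end' keys,
    with ISO 8601 timestamps. Returns (kept_rows, removed_weekend_rows).
    """
    kept, removed_weekend, seen = [], [], set()
    for row in rows:
        start = datetime.fromisoformat(row["start"])
        end = datetime.fromisoformat(row["end"])

        # Remove weekend entries (weekday() is 5 for Saturday, 6 for Sunday).
        if start.weekday() >= 5:
            removed_weekend.append(row)
            continue

        # Tighten start/end times to the working window of the start date.
        start = max(start, datetime.combine(start.date(), DAY_START))
        end = min(end, datetime.combine(start.date(), DAY_END))
        if end <= start:
            continue  # nothing of the entry falls inside the window

        # Delete duplicates: same employee and same tightened times.
        key = (row["employee"], start, end)
        if key in seen:
            continue
        seen.add(key)

        # Recalculate the duration column from the tightened times.
        hours = round((end - start).total_seconds() / 3600, 2)
        kept.append({
            "employee": row["employee"],
            "start": start.isoformat(),
            "end": end.isoformat(),
            "hours": hours,
        })
    return kept, removed_weekend
```

Because the function returns the removed weekend rows separately, a preview can list them next to the cleaned data instead of silently dropping them.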

What truly set Claude apart was the user experience. It generated an interactive preview window on the side, highlighting the removed weekend entries in yellow and displaying the cleaned time entries. Over the next 20 minutes, I refined the rules through natural conversation, and Claude instantly re-ran the scripts. I had a perfect product in 30 minutes.

Trajectory of Business AI

This experience highlights why I am moving more of my analytical workflows to Claude, and why Google should be deeply concerned.

Google has promised updates: at Cloud Next 2026, it teased “Gemini in Sheets” updates and “Workspace Skills.” But right now, you are paying for a fragmented ecosystem of separate apps, sidebars, and broken promises.

Anthropic, meanwhile, is building an all-in-one powerhouse. Their native code execution bridges the gap between text and action. Furthermore, the burgeoning ecosystem around Claude Skills and the Skills Marketplace means businesses can now plug highly specific, community-built workflows directly into their AI.

You no longer have to wait for Google to build a specific spreadsheet feature. Claude will just write a script and build the feature for you in real time. If Google wants to justify the cost of its Workspace AI tiers, it needs to stop building clunky sidebars and start building functional data sandboxes.

Hopefully Google realizes how far behind it has fallen and catches up soon, as AI companies usually do within a month or two. That’s why they say time is money (literally, in this case: the demo file was a timesheet, LOL), so they had better get cracking. 😂

Happy AI prompting

Michael Plis

References

1. The Architecture of Claude’s “Code Execution”

If you are curious about how Claude was able to clean the spreadsheet so quickly, it isn’t guessing the text. Anthropic recently deployed a native “Code Execution Tool” that allows Claude to write and run Python/Bash commands in a secure, sandboxed environment. This shifts the AI from a “text predictor” to a “computational agent.”

Anthropic API Docs: Code Execution Tool and Data Analysis Capabilities

Towards AI: Building a Lean Claude Code–Style Agent in Python

2. The Data Engineering Gap: LLMs vs. Code Generation

The likely reason Gemini froze during the test is that traditional Large Language Models (LLMs) are built for text summarization and generation, not deterministic data transformation. For complex Excel/Sheets tasks, an AI must generate code to manipulate the data safely, rather than trying to process 50,000 spreadsheet cells via a chat interface.

Nexla Engineering Blog: Evaluating LLM-Generated Transformations for Data Engineering (Why Sandboxed Python wins)

Anthropic Engineering: Effective Context Engineering for AI Agents

3. The State of Gemini in Google Workspace

Google is actively trying to democratize AI within Docs and Sheets, but as current tests show, it is still largely confined to text drafting, email summaries, and basic formula generation. Deep, multi-step data cleaning inside Sheets remains a massive bottleneck due to UI fragmentation and lack of autonomous code execution.

AI Smart Ventures: Is Google Gemini Better Than ChatGPT for Work? (A look at Workspace limitations vs. strengths)

Google Workspace Updates: The roadmap for Gemini in Google Sheets (Note: This outlines Google’s promises, which currently fall short of Anthropic’s live execution).